From the Terminal

I "Rewrote" My ORM Again with AI. And Ended Up Benchmarking Every PHP ORM in the Process.

You may or may not have read my other blog posts about how

How I gamified unit testing my PHP framework and went from 0% unit test coverage to 93% in 30 days

(7 years ago!) or about how

PHP attributes are so awesome I just had to add attribute based field mapping to my ORM

I've been writing ORMs by hand for 20+ years. This isn't even the only one I've worked on. ORMs for me are like puzzles so I am not kidding when I say I think about them when I'm just quietly going about my day. So with ML I've been able to basically get to where I want to be with Divergence. If you prefer blog posts written by humans I can attest that nothing in this post is written by AI and every metric presented in this post is deterministic and readily quantifiable.

Where's the benchmark result?

Here: https://the-php-bench.technex.us/runs/1

Okay so what? How is this better than other benchmarks? It's obviously vibe coded.

I hand curated a very specific architecture for how it works rather than simply that it works. Every framework has it's own vendor. Tests run in a sub process. Tests run one at a time instead of in parallel. The benchmark collects clear information about the hardware in order to provide a clear baseline. Results are logged appropriately locally and optionally uploaded to that site I put together above. Six models were hand designed in order to stress the various database types in different ways and that shows clearly in the profile of the result set.

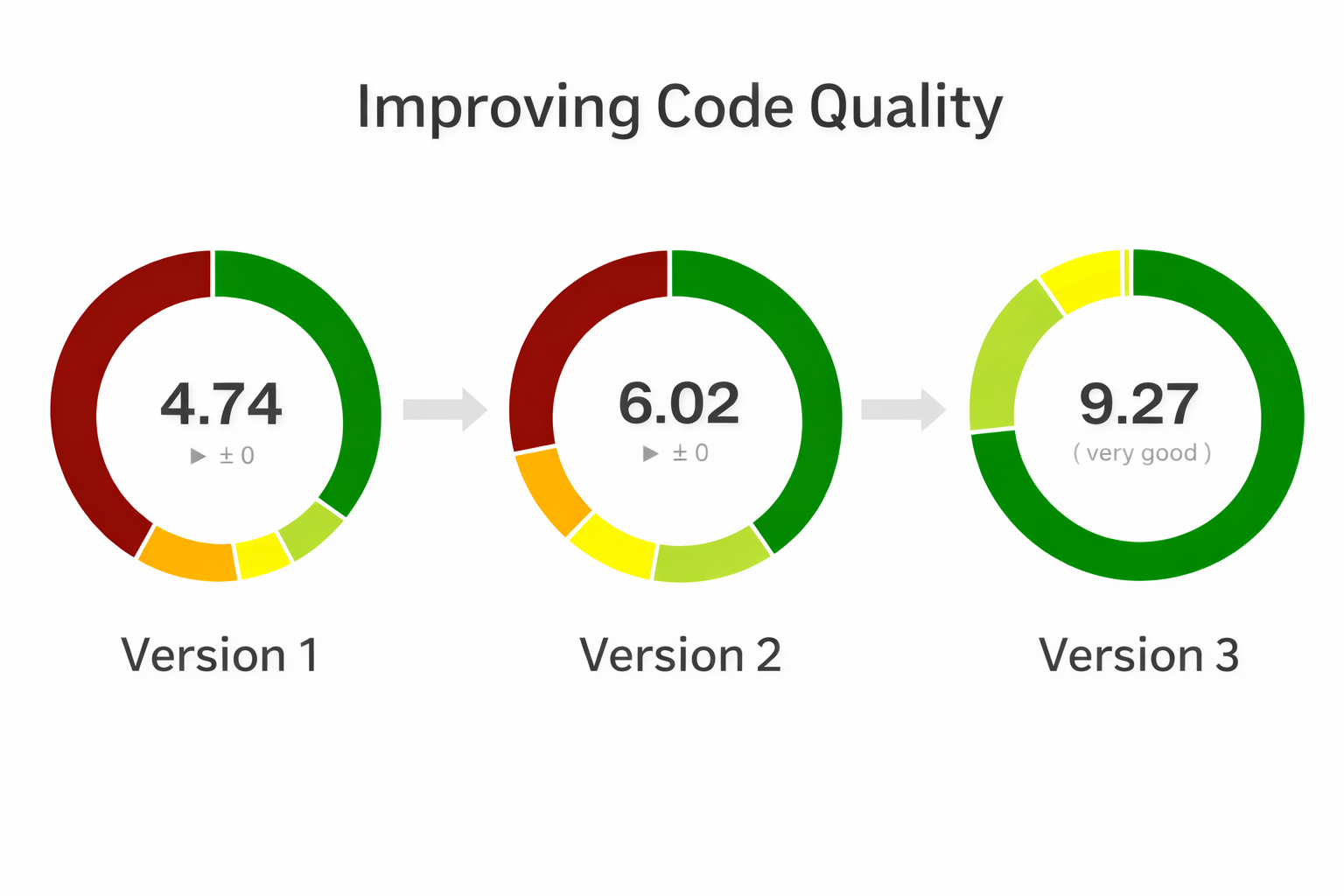

Code Quality

I tend to go into way too much detail about my ORM that no one uses so if all you care about is the benchmark results of every other framework just look at the benchmark results above. But if you care about improving code complexity read on.

Prior to version three I had already been wanting to improve code quality and was already going in that direction.

In version three I was able to find ways to improve code quality substantially but it all built on top of the work I had done by hand moving from Version 1 to 2.

Version 1 of Divergence was mostly dominated by a large ActiveRecord monolith class paired with a MySQL class. Together they were able to stand up an impressive PHP 5.x era ActiveRecord ORM with a good track record that stood up well through PHP 7.4. PHP 8 however introduced attributes and there is where I started to think critically about Code Complexity and way of breaking up the code into smaller chunks with lower complexity.

Code Complexity? What are you talking about?

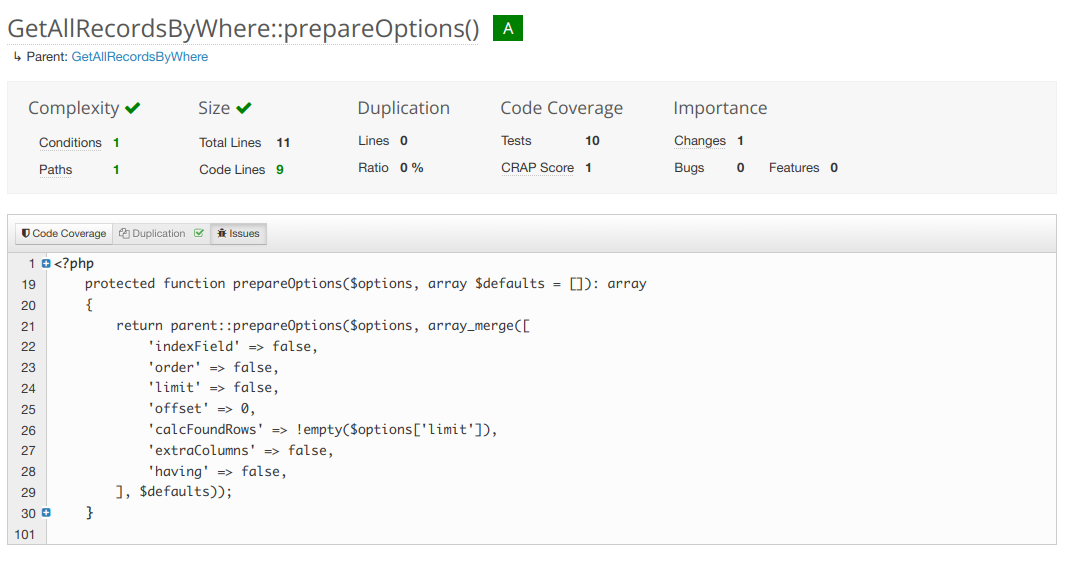

Code complexity is a measure of how many pathways your code can take. The fewer, the simpler, the easier it is to write a unit test that covers 100% of the things your function can do.

Above is a fairly simple and not very complex function.

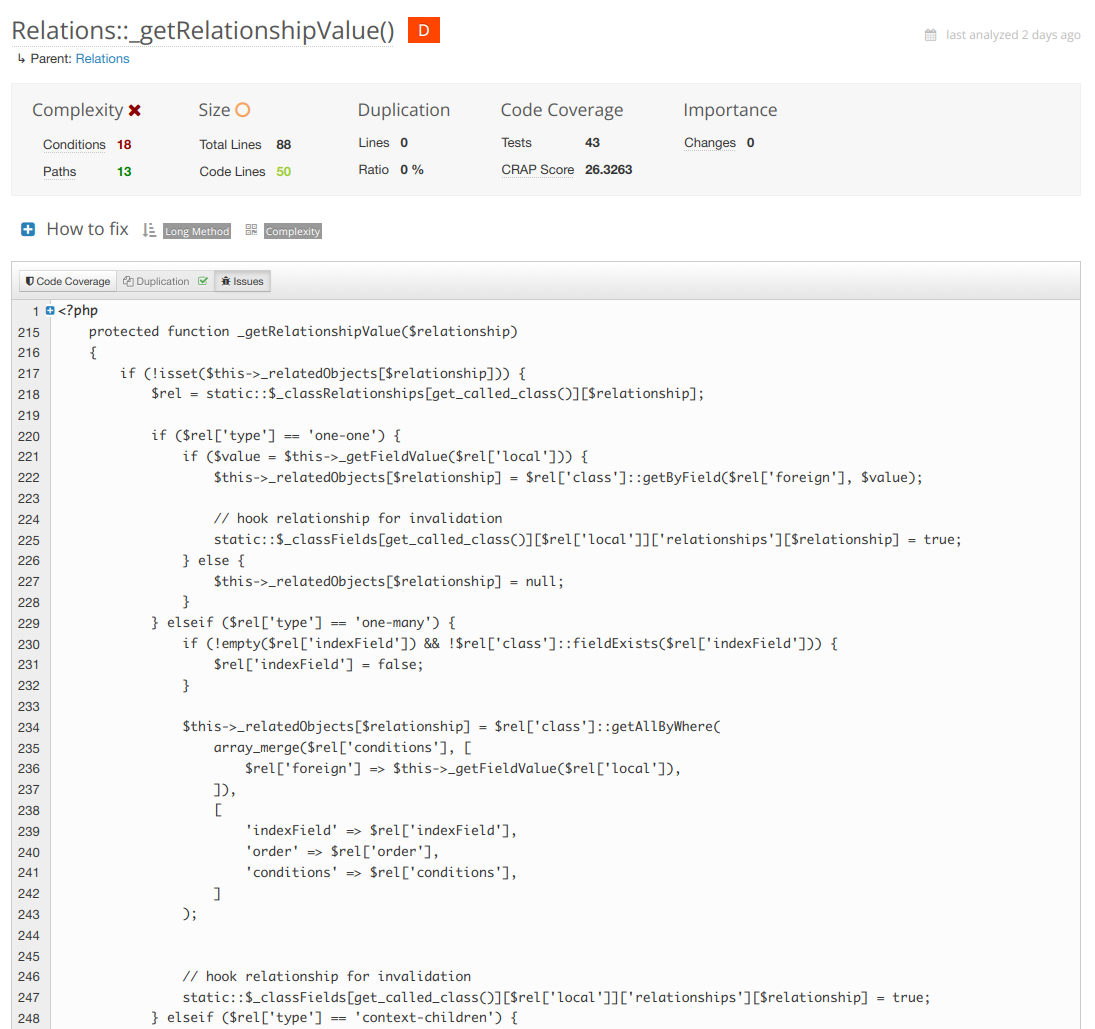

On the other side of the fence we've got the most complex function in the framework at the moment which is my next target for refactoring.

In this case the refactor is obvious. Break up each of the relationship types into smaller method calls inside the same class. Each one responsible for only it's own thing. Complexity drops. Your tests become far simpler and targeted.

Above is the worst class now... in version three. But back in version one the code was in a way worse shape with complexity.

Version 1 of Divergence - Large Monolith Classes

In 1.0 ActiveRecord was handling almost everything including mapping type values to MySQL type values so in 1.1 I introduced the "FieldSetMapper" abstraction. which allows a model to set it's own Field Mapper if necessary. A field mapper handles how types are cast between the SQL query and PHP data types. This lowered ActiveRecord's complexity in a great direction but it was still responsible for instantiating and hydrating new objects, getters, as well as the life cycle of the object. Pretty much still a monolith. Not great for code complexity.

The next thing to refactor was how queries were being written. In ActiveRecord and VersionedRecord there where only a handful of spots where a SELECT, UPDATE, INSERT, or DELETE was being generated so it was an obvious spot to start doing query writing through a class. I implemented __toString() here so all the existing string handling just keeps doing what it was doing. Tests passed no problem. At this point the ground work was laid for being able to implement other storage engines besides MySQL for the first time. This is also where I started to think of "Getters" as separate from the ActiveRecord class.

There are two types of "Getters" in ORMs. One is for getting a field, property, meta data, or relationship value internal to an object instance. The other type of "Getter" is when you want to pull records from your storage engine and have them be instantiated in your code. That second type is the one I am referring to here.

In CS-coder speak this type of Getter is essentially a factory. A place where we grab data out of the storage engine like MySQL and then shove that data into PHP objects. Getters have two parts. A query part and a hydration part. These types of Getters are broken up further into two types. Multiple records and single records. Some of them like getByWhere have a direct all version getByAllWhere. The only difference is getting one of itself to multiple of itself. So here they are. The object instantiation getters of Divergence.

| Single | Multiple |

|---|---|

getByID |

|

getByHandle |

|

getByContextObject |

getAllByContextObject |

getByContext |

getAllByContext |

getByField |

getAllByField |

getByWhere |

getAllByWhere |

getByQuery |

getAllByQuery |

getRecordByField |

|

getRecordByWhere |

getAllRecordsByWhere |

getAllByClass |

|

getAll |

|

getAllRecords |

Okay so why? Why do that?

A lot of ORMs just do this. A factory class has the getters and spits out Employee objects. Simple. Well in the best case. Something like this.

<?php

$employees = new EntityManager('Employee')->all();In Divergence I do this:

<?php

$employees = Employee::getAll();This has not changed since Version 1 either. From the predecessor of this framework Emergence to now this code is still valid. I'm not gonna say my changes haven't broken user space but they certainly did so in ways that are easy to port. When you have these getters registered as static methods under your class you get a global access to these models from anywhere in your code. They become singleton globals we can use anywhere.

In version 2 of Divergence I started to abstract them out by making them a trait you could attach to your Models. The default Class "Model" would automatically assign them so the global method calls persisted through the refactor and again no tests were harmed. Yet now they were not in ActiveRecord and made the code far less complex.

It became immediately apparent that every single function in the table above was either using instantiateRecord or instantiateRecords. After running the query the return data had to be converted to PHP objects and that's where it happened. So along with the above methods I was able to also move hydration into the Getters trait and a few other helper methods. They technically still accessed field and relationship mappers in the ActiveRecord class but hydration was still something we could move out of ActiveRecord as long as the mapping field mapping was calculated once. This is around the time when I wrote my blog post about attribute field maps in PHP 8.

At the same time I also converted my controllers to use PSR7 and PSR15 handlers and emitters. The "old school" way is to just echo, print, and run header() whenever you please in your controller. The new way is to generate an HTTP controller object, set everything, and then pass it to an "Emitter" that does the printing and http handling. To be honest that deserves it's own blog post but suffice to say it lowered complexity further. At the same time I added Media handling classes that needed heavy refactoring. But at least for the moment I was able to take the wins and get the score from a 4.7 to a 6 with these changes. Still to hit the 9 I was looking for it would take some heavy thinking on how to tackle the next iteration. And all this was BEFORE a single AI algorithm had been used.

So in version 2 I identified these goals for version 3.

- I want to support SQLite and PostgreSQL

- To do so the Connection must be aware of it's engine and be tied to a given Model's instantiation and Hydration and the Query Writer needs to know about the Connection's Storage Engine in order to write proper syntax.

- By providing a Factory class we can keep that state with the instantiation and hydration cleanly.

- Factory classes also make it easier to test things in isolation.

- Using __call we can create different types of methods that used to live in ActiveRecord that are converted to their own classes.

- Getters that used to exist in ActiveRecord can still run statically if they are registered with the Model using __call.

- What's left after removing these things from ActiveRecord is events and those can then cleanly be moved into their own classes as well.

All this abstraction sounds like a lot but we went from ActiveRecord doing manual INSERT and UPDATE query writing hardcoded MySQL syntax. Instead now when we cast from SQL to PHP and back and the system is neatly organized and any overrides can happen in the most appropriate place. Super simple to test. The query writers for each storage engine work against whatever the active connection is and only have overrides when necessary.

Should Models Even Have Getters and Setters to Get Records Of Themselves From the Storage Engine?

PHP is inherently procedural and owing to it's roots. Because it's procedural things are happening one at a time from beginning to end. When scaling you might have a read replica of the database that is used as a backup. In that case you might even run something like setConnection(). When your secondary connection is the same storage engine things are easy but what if it's a totally different type of SQL? That's basically why it's better to have a Factory or "Entity Manager". You might want to create two for the same Model for two different connections or more than that even. But...... 99% of projects won't ever need it. So I want to serve both schools of thought. And that is why in version 3 of Divergence I decided to introduce a Factory class for the first time. Now all the connection handling, storage engine SQL translation, mapping, and object hydration doesn't have to live in ActiveRecord. The thing is you can still use __call to build "fake" methods so I did just that for the Getters that used to live in ActiveRecord. So you can still use them and it will make you the Factory in the background but if you really need the abstraction you can also make the factories and manage them manually.

New Hydration Method

In version three besides the difference in where this stuff is happening I also abstracted out the way that objects are instantiated.

In Divergence 1 and 2 we did the classic late static binding.

Anyway here's version 3 of Divergence.

Honestly I'm not even ready to pin this version of Divergence 3 as "final". There's several new things I wanna add but well meh ship it. It's running this blog in any case and doing it quite well.

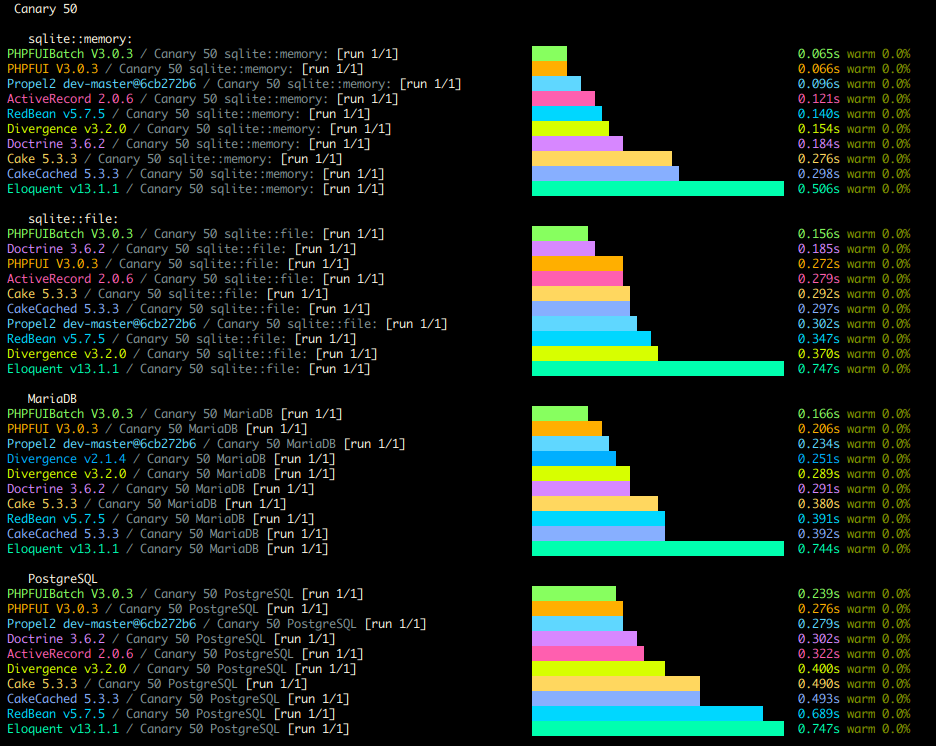

Here are my benchmarks right now

In the process of doing all this I really wanted a good profiler and test case so I ended up building a new PHP Benchmarking Suite which I call "The PHP Bench". The screenshot above is the results command doing it's thing on the CLI. But then I made a website for it where results can be viewed online.

I'm particularly proud of the architecture of this benchmark. I hand designed the Models to simulate several types of data. Canary in particular handles every type of field my framework supports. But then I also have fixed ints, variable strings, and fixed strings, among other benchmarks you can run. Every type shows a clear and distinct performance profile among the various frameworks.

Each framework gets their own composer.json and isolated vendor path that only gets required for their individual test so none of the frameworks can really interact with each other or cause conflicts. The findings were completely unexpected to me but I'm proud to say my self made ORM is among the world's best.

Now that I have a profiler and benchmark I can use my next step is to scaffold some first to save() and some first to query profilers against some of these and see if I can shave another 0.02-0.05 ms per operation. I never really thought I'd get to hit this milestone so soon. So that's nice.

I honestly feel like a dog that finally caught the truck. Now what.

Haha just kidding. There's so much I can do with this now! With agents I can just throw the docs at it. I even got a minimal context size one written up for MVPs. I've already got this blog working of course but what's next? You can't see it but I already got my admin page slightly tricked out.

I didn't even use react. I told it to use classic jquery ajax on top of vanilla javascript so it's dead simple.

So what else is there to do? I've been wanting to tackle some federated things and see how far I can get. In particular I think it would be fun to turn this blog into a federated node and implement the ActivityPub protocol.

Stuff You Can Play With

A few useful life cycle tracking variables every ORM should have

Right now I'm in the unusual position of being responsible for maintaining two different ORMs libraries. Occasionally I want to implement a feature that exists in one of them in the other. One such feature recently came up and I think this is something that all ORMs would benefit from whether or not they are custom written or maintained by the community. In fact after looking into things it looks like some of these already exist in one way or another in some of the most popular ORMs available.

Let's take a look at some of them.

- isNew - False by default. Set to true only when an object that isPhantom is saved

- isUpdated - Set to true when an object that already existed in the data store is saved.

- isPhantom - True if this object was instantiated as a brand new object and isn't yet saved.

- isDirty - False by default. Set to true only when an object has had any field change from it's state when it was instantiated.

Some of these properties might already exist in one form or another in your frameworks. They are simple to implement by simply adding them to the functions in the Model class at different points in the life cycle of the object. Most of them are extremely simple but as you'll see with isDirty consideration might get extremely complex in a hurry.

isNew

The point of isNew is to be able to tell that this object was created during this script execution but was previously saved. Before save it's obvious that an object is new by it's missing primary key. However after saving all evidence of this object being new has been erased. This property lets you know that this object was previously created by you so that if it's part of a large array you can iterate over the items and still know which objects are the "new" ones.

Let's take a look at how this might be useful.

<?php

$cars = Car::GetAll();

$car = new Car();

$car->save();

$cars[] = $car;

foreach($cars as $car) {

if($car->isNew) {

// do something special to the objects we created above even if we saved them since then

}

}

isUpdated

This one is pretty simple. This let's us know something that was already in the data store, has been updated by us since initialization.

isPhantom

Let's us know if we need to Update or Insert this model when saving the object. If Insert this gets set to false right after.

Implementation Details

Let's table isDirty for now and come back to it. For now let's take a look at some code. Here we do a pretty basic boiler plate for the variables we want and how they are manipulated later on.

__construct()

<?php

public function __construct(..., $isDirty = false, $isPhantom = null /* you can

implement these differently but the hydrator needs some way to set these

for an instantiated object */)

{

$this->_isPhantom = false; // this should be set by your model hydrator at time of hydration

$this->_isDirty = $this->_isPhantom || $isDirty;

$this->_isNew = false;

$this->_isUpdated = false;

}save()

public function save() {

if ($this->isDirty) {

// create new or update existing

if ($this->_isPhantom) {

// do insert

$this->_isPhantom = false;

$this->_isNew = true;

} else {

// do update

$this->_isUpdated = true;

}

$this->_isDirty = false; // only if you don't use a function to detect this

}

}So here we can see how it all comes together from initialization to storage. But what about isDirty? What's so complex about it? Well let's take a look.

isDirty

There's multiple ways to implement isDirty and both have positive aspects as well as drawbacks. If your ORM uses direct PHP object properties that map to fields only one is open to you anyway. Let's take a look at what I mean.

If you use an ORM where the fields are not object properties and are in-fact "not real" that means that you can actually implement this as a simple boolean.

For example:

<?php

public function __construct(...) {

$this->_isDirty = false;

}

public function __set($field,$value) {

$this->_isDirty = true;

...

}

public function save() {

...

$this->_isDirty = false;

}The best thing about this method is how light is it on the run time. You simple need one boolean worth of memory space for each object. Unfortunately this method also can't tell when the field was set back to it's original value or if a field was edited but the value remained the same as it was before. So while you may save some memory up front in your run time you might end up wasting a lot more memory resources in your database updating records that have no new data or simply slowing down batch processes sending writes to the database that it will end up wasting time. Furthermore this only works with dynamically allocated "magic" properties.

Your other option is to use a method to test each field for a change and return true or false. Okay but how? By keeping a copy of the "original" field values of course. And this is where this method starts to show it's down sides. You actually end up approximately doubling the amount of memory you need to make this happen. Plus that itself has complexity. For example do you change your original variables after a save? Unfortunately the answer is yes. Simple because you want isDirty to go back to false after running a save. Some things are free though. Any object that is a phantom is dirty by definition.

public function isDirty()

{

if ($this->isPhantom) {

return true;

}

$fields = static::getFields();

foreach ($fields as $field) {

if ($this->isFieldDirty($field)) {

return true;

}

}

return false;

}

public function isFieldDirty($field)

{

if (isset($this->_originalValues[$field])) {

if ($this->_originalValues[$field] !== $this->$field) {

return true;

} else {

return false;

}

}

return false;

}Of course you'll need to set_originalValues in your object during hydration. New objects won't start tracking this until immediately after save. It's worth noting that Laravel's Eloquent implements isDirty in a very similar way.

Closing Thoughts

Most ORMs implement these one way or another out of necessity however it would be nice if ORMS could standardize on these and perhaps start using the same terminology surrounding them. Is dirty is optional for many ORMs when it's beneficial to force a save() even if nothing has been changed simply to updated the modified at timestamp for the record in the data store. Still a well designed ORM should let you do both depending on your needs.